Interactive BLEU

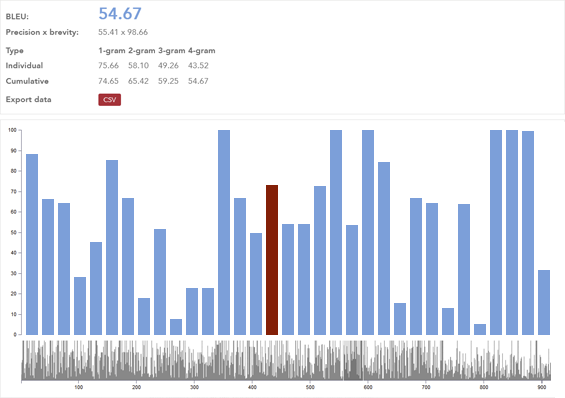

With the Interactive BLEU tool, users can see their system evaluation results in a dynamic, sentence-by-sentence graph, or compare two systems to see which performs better.

This enables users to evaluate MT quality for each sentence individually, providing deeper insight into the performance of MT engines.

How you can benefit from Interactive BLEU

Interactive BLEU lets users "peek under the hood" of their MT systems and assess quality at the level of individual sentences.

Translators can use the tool to both evaluate the quality of their MT system and perform a comparative evaluation of MT files.

To evaluate your MT system quality:

- Go to the MT system page

- Choose your MT system

- Open "Details"

- Expand the Evaluation step

- Click on the BLEU score

- See the full evaluation

To perform a comparative evaluation of MT files:

- Go to the Interactive BLEU score evaluator page

- Upload a file in the source language

- Upload a human-translated file in the target language

- Upload one or two machine-translated files

- Click on the "Score" button to perform a comparative evaluation

- See the full evaluation
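To illustrate what a sentence-by-sentence comparison involves, here is a minimal Python sketch. It is not the tool's actual implementation: the function names (`sentence_bleu`, `compare_systems`), the whitespace tokenization, and the add-one smoothing are all assumptions made for this example. It scores each machine-translated sentence against the human reference and reports which of two systems scores higher on each line.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams of order n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(reference, hypothesis, max_n=4):
    """Smoothed sentence-level BLEU (illustrative; add-one smoothing is an assumption)."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not hyp:
        return 0.0
    log_precision = 0.0
    for n in range(1, max_n + 1):
        hyp_counts = ngrams(hyp, n)
        ref_counts = ngrams(ref, n)
        # Clipped overlap: each hypothesis n-gram counts at most as often as in the reference.
        overlap = sum(min(count, ref_counts[gram]) for gram, count in hyp_counts.items())
        total = sum(hyp_counts.values())
        # Add-one smoothing keeps the logarithm defined when there is no overlap.
        log_precision += math.log((overlap + 1) / (total + 1)) / max_n
    # Brevity penalty for hypotheses shorter than the reference.
    brevity = 1.0 if len(hyp) >= len(ref) else math.exp(1 - len(ref) / len(hyp))
    return brevity * math.exp(log_precision)


def compare_systems(references, system_a, system_b):
    """Return (line number, score A, score B, better system) for each sentence."""
    rows = []
    for i, (ref, a, b) in enumerate(zip(references, system_a, system_b), start=1):
        score_a = sentence_bleu(ref, a)
        score_b = sentence_bleu(ref, b)
        if score_a > score_b:
            better = "A"
        elif score_b > score_a:
            better = "B"
        else:
            better = "tie"
        rows.append((i, score_a, score_b, better))
    return rows


if __name__ == "__main__":
    references = ["the cat sat on the mat"]
    system_a = ["the cat sat on the mat"]
    system_b = ["a cat is on a mat"]
    for line, score_a, score_b, better in compare_systems(references, system_a, system_b):
        print(f"line {line}: A={score_a:.3f} B={score_b:.3f} better={better}")
```

In practice, the three lists would be read line by line from the uploaded human-translated file and the one or two machine-translated files, so that each row corresponds to one sentence in the graph.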

Interactive BLEU score evaluator

Interactive BLEU is easy to use and performs a quick analysis of your MT system.
